Tracing in AI Toolkit

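AI Toolkit provides tracing capabilities to help you monitor and analyze the performance of your AI applications. You can trace the execution of your AI applications, including interactions with generative AI models, to gain insights into their behavior and performance.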

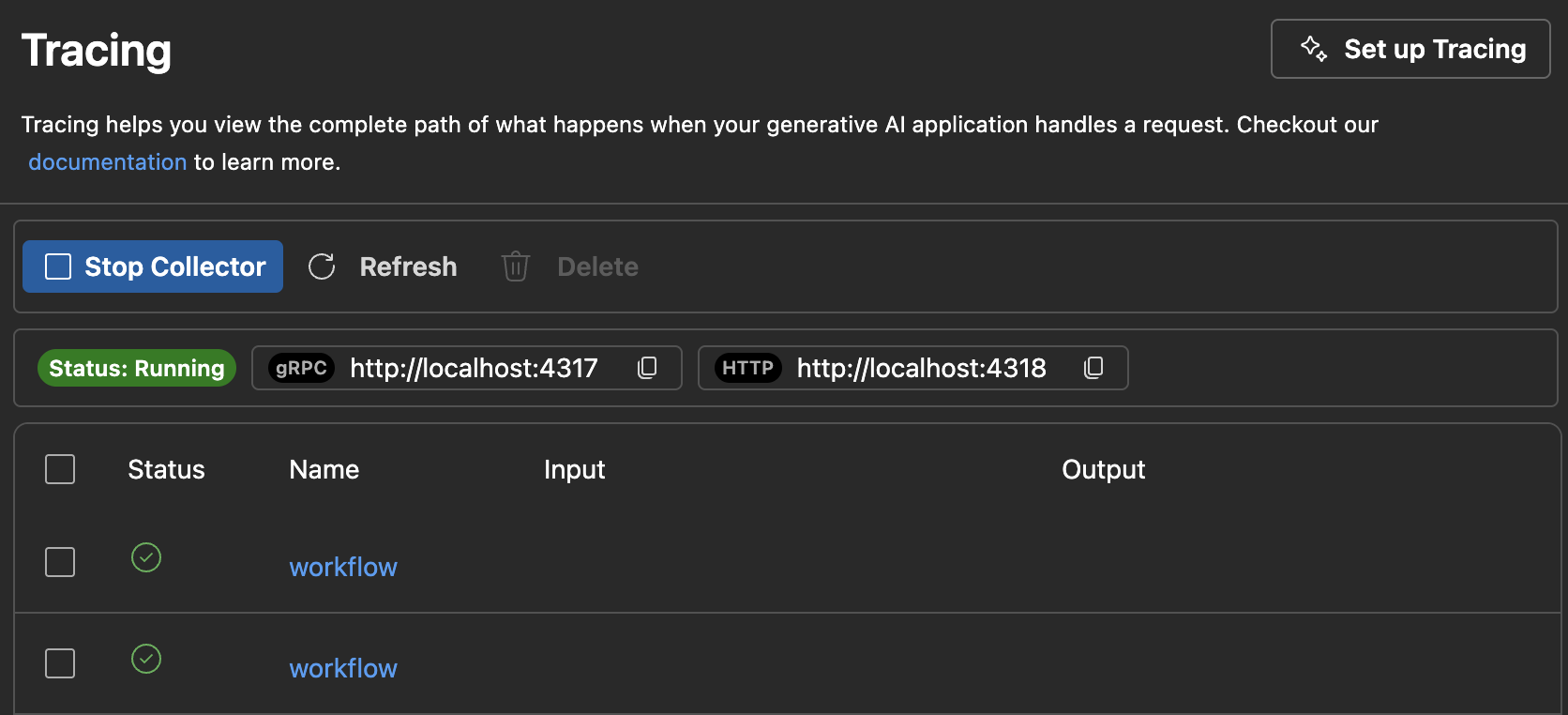

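AI Toolkit hosts a local HTTP and gRPC server to collect trace data. The collector server is compatible with OTLP (OpenTelemetry Protocol), and most language model SDKs either support OTLP directly or have non-Microsoft instrumentation libraries that support it. Use AI Toolkit to visualize the collected instrumentation data.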

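Any framework or SDK that supports OTLP and follows the semantic conventions for generative AI systems is supported. The following table lists common AI SDKs that have been tested for compatibility.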

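Get started with tracing

- Open the tracing webview by selecting Tracing in the tree view.

- Select the Start Collector button to start the local OTLP trace collector server.

- Enable instrumentation with a code snippet. See the Set up instrumentation section for code snippets for different languages and SDKs.

- Generate trace data by running your app.

- In the tracing webview, select the Refresh button to see new trace data.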
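If Refresh shows no new traces, a useful first check is whether the collector is listening at all. The following minimal Python sketch assumes the collector accepts OTLP/HTTP on localhost port 4318, the endpoint that every snippet in this article exports to. The function name collector_is_listening is hypothetical, and it only tests that a TCP connection can be opened, not that the listener actually speaks OTLP.

```python
import socket


def collector_is_listening(host: str = "localhost", port: int = 4318, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds.

    This only shows that something accepts connections on the port; it does
    not verify that the listener is the AI Toolkit OTLP collector.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Connection refused, timeouts, and name-resolution errors are all OSError subclasses.
        return False


if __name__ == "__main__":
    if collector_is_listening():
        print("Something is listening on localhost:4318; run your instrumented app.")
    else:
        print("Nothing is listening on localhost:4318; select Start Collector first.")
```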

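Set up instrumentation

Set up tracing in your AI application to collect trace data. The following code snippets show how to set up tracing for different SDKs and languages.

The process is similar for all SDKs:

- Add tracing to your LLM or agent app.

- Set up the OTLP trace exporter to use the AI Toolkit (AITK) local collector.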
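To make the exporter step concrete, the following dependency-free Python sketch hand-builds one span in the OTLP/HTTP JSON encoding and POSTs it to the collector's /v1/traces path, which is the job the SDK exporters below do for you (they send protobuf rather than JSON). It assumes the AI Toolkit collector also accepts JSON-encoded OTLP; the helper names, service name, and model name are illustrative. The gen_ai.operation.name and gen_ai.request.model attributes and the "chat {model}" span name follow the OpenTelemetry semantic conventions for generative AI, while real instrumentation libraries record many more attributes.

```python
import json
import secrets
import time
import urllib.request


def _attr(key: str, value: str) -> dict:
    # OTLP/JSON wraps each attribute value in a typed AnyValue object.
    return {"key": key, "value": {"stringValue": value}}


def build_chat_span_payload(service_name: str, model: str, duration_s: float = 0.5) -> dict:
    """Build an OTLP/JSON trace export request that holds a single client span."""
    end_ns = time.time_ns()
    start_ns = end_ns - int(duration_s * 1_000_000_000)
    span = {
        "traceId": secrets.token_hex(16),    # 16 random bytes -> 32 hex characters
        "spanId": secrets.token_hex(8),      # 8 random bytes -> 16 hex characters
        "name": f"chat {model}",             # GenAI convention: "{operation} {model}"
        "kind": 3,                           # SPAN_KIND_CLIENT
        "startTimeUnixNano": str(start_ns),  # int64 fields may be sent as decimal strings
        "endTimeUnixNano": str(end_ns),
        "attributes": [
            _attr("gen_ai.operation.name", "chat"),
            _attr("gen_ai.request.model", model),
        ],
    }
    return {
        "resourceSpans": [{
            "resource": {"attributes": [_attr("service.name", service_name)]},
            "scopeSpans": [{"scope": {"name": "hand-built-example"}, "spans": [span]}],
        }]
    }


def send(payload: dict, url: str = "http://localhost:4318/v1/traces") -> int:
    """POST the payload as JSON and return the HTTP status code."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=5) as response:
        return response.status


if __name__ == "__main__":
    payload = build_chat_span_payload("hand-built-otlp-example", "gpt-4.1")
    try:
        print("Collector responded with HTTP", send(payload))
    except OSError as exc:  # URLError and HTTPError are OSError subclasses
        print("Could not reach the collector:", exc)
```

Hand-built spans are only useful for checking the pipeline; in an application, let the instrumentation libraries create spans so that parent-child links and message content are recorded consistently.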

Azure AI Inference SDK - Python

Installation:

pip install opentelemetry-sdk opentelemetry-exporter-otlp-proto-http azure-ai-inference[opentelemetry]

Setup:

import os

os.environ["AZURE_TRACING_GEN_AI_CONTENT_RECORDING_ENABLED"] = "true"

os.environ["AZURE_SDK_TRACING_IMPLEMENTATION"] = "opentelemetry"

from opentelemetry import trace, _events

from opentelemetry.sdk.resources import Resource

from opentelemetry.sdk.trace import TracerProvider

from opentelemetry.sdk.trace.export import BatchSpanProcessor

from opentelemetry.sdk._logs import LoggerProvider

from opentelemetry.sdk._logs.export import BatchLogRecordProcessor

from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter

from opentelemetry.sdk._events import EventLoggerProvider

from opentelemetry.exporter.otlp.proto.http._log_exporter import OTLPLogExporter

resource = Resource(attributes={

"service.name": "opentelemetry-instrumentation-azure-ai-agents"

})

provider = TracerProvider(resource=resource)

otlp_exporter = OTLPSpanExporter(

endpoint="http://localhost:4318/v1/traces",

)

processor = BatchSpanProcessor(otlp_exporter)

provider.add_span_processor(processor)

trace.set_tracer_provider(provider)

logger_provider = LoggerProvider(resource=resource)

logger_provider.add_log_record_processor(

BatchLogRecordProcessor(OTLPLogExporter(endpoint="http://localhost:4318/v1/logs"))

)

_events.set_event_logger_provider(EventLoggerProvider(logger_provider))

from azure.ai.inference.tracing import AIInferenceInstrumentor

AIInferenceInstrumentor().instrument(True)

Azure AI Inference SDK - TypeScript/JavaScript

Installation:

npm install @azure/opentelemetry-instrumentation-azure-sdk @opentelemetry/api @opentelemetry/exporter-trace-otlp-proto @opentelemetry/instrumentation @opentelemetry/resources @opentelemetry/sdk-trace-node

Setup:

const { context } = require('@opentelemetry/api');

const { resourceFromAttributes } = require('@opentelemetry/resources');

const {

NodeTracerProvider,

SimpleSpanProcessor

} = require('@opentelemetry/sdk-trace-node');

const { OTLPTraceExporter } = require('@opentelemetry/exporter-trace-otlp-proto');

const exporter = new OTLPTraceExporter({

url: 'http://localhost:4318/v1/traces'

});

const provider = new NodeTracerProvider({

resource: resourceFromAttributes({

'service.name': 'opentelemetry-instrumentation-azure-ai-inference'

}),

spanProcessors: [new SimpleSpanProcessor(exporter)]

});

provider.register();

const { registerInstrumentations } = require('@opentelemetry/instrumentation');

const { createAzureSdkInstrumentation } = require('@azure/opentelemetry-instrumentation-azure-sdk');

registerInstrumentations({

instrumentations: [createAzureSdkInstrumentation()]

});

Foundry Agent Service - Python

Installation:

pip install opentelemetry-sdk opentelemetry-exporter-otlp-proto-http azure-ai-inference[opentelemetry] azure-ai-agents

Setup:

import os

os.environ["AZURE_TRACING_GEN_AI_CONTENT_RECORDING_ENABLED"] = "true"

os.environ["AZURE_SDK_TRACING_IMPLEMENTATION"] = "opentelemetry"

from opentelemetry import trace, _events

from opentelemetry.sdk.resources import Resource

from opentelemetry.sdk.trace import TracerProvider

from opentelemetry.sdk.trace.export import BatchSpanProcessor

from opentelemetry.sdk._logs import LoggerProvider

from opentelemetry.sdk._logs.export import BatchLogRecordProcessor

from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter

from opentelemetry.sdk._events import EventLoggerProvider

from opentelemetry.exporter.otlp.proto.http._log_exporter import OTLPLogExporter

resource = Resource(attributes={

"service.name": "opentelemetry-instrumentation-azure-ai-agents"

})

provider = TracerProvider(resource=resource)

otlp_exporter = OTLPSpanExporter(

endpoint="http://localhost:4318/v1/traces",

)

processor = BatchSpanProcessor(otlp_exporter)

provider.add_span_processor(processor)

trace.set_tracer_provider(provider)

logger_provider = LoggerProvider(resource=resource)

logger_provider.add_log_record_processor(

BatchLogRecordProcessor(OTLPLogExporter(endpoint="http://localhost:4318/v1/logs"))

)

_events.set_event_logger_provider(EventLoggerProvider(logger_provider))

from azure.ai.agents.telemetry import AIAgentsInstrumentor

AIAgentsInstrumentor().instrument(True)

Foundry Agent Service - TypeScript/JavaScript

Installation:

npm install @azure/opentelemetry-instrumentation-azure-sdk @opentelemetry/api @opentelemetry/exporter-trace-otlp-proto @opentelemetry/instrumentation @opentelemetry/resources @opentelemetry/sdk-trace-node

Setup:

const { context } = require('@opentelemetry/api');

const { resourceFromAttributes } = require('@opentelemetry/resources');

const {

NodeTracerProvider,

SimpleSpanProcessor

} = require('@opentelemetry/sdk-trace-node');

const { OTLPTraceExporter } = require('@opentelemetry/exporter-trace-otlp-proto');

const exporter = new OTLPTraceExporter({

url: 'http://localhost:4318/v1/traces'

});

const provider = new NodeTracerProvider({

resource: resourceFromAttributes({

'service.name': 'opentelemetry-instrumentation-azure-ai-inference'

}),

spanProcessors: [new SimpleSpanProcessor(exporter)]

});

provider.register();

const { registerInstrumentations } = require('@opentelemetry/instrumentation');

const { createAzureSdkInstrumentation } = require('@azure/opentelemetry-instrumentation-azure-sdk');

registerInstrumentations({

instrumentations: [createAzureSdkInstrumentation()]

});

Anthropic - Python

OpenTelemetry

Installation:

pip install opentelemetry-sdk opentelemetry-exporter-otlp-proto-http opentelemetry-instrumentation-anthropic

Setup:

from opentelemetry import trace, _events

from opentelemetry.sdk.resources import Resource

from opentelemetry.sdk.trace import TracerProvider

from opentelemetry.sdk.trace.export import BatchSpanProcessor

from opentelemetry.sdk._logs import LoggerProvider

from opentelemetry.sdk._logs.export import BatchLogRecordProcessor

from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter

from opentelemetry.sdk._events import EventLoggerProvider

from opentelemetry.exporter.otlp.proto.http._log_exporter import OTLPLogExporter

resource = Resource(attributes={

"service.name": "opentelemetry-instrumentation-anthropic-traceloop"

})

provider = TracerProvider(resource=resource)

otlp_exporter = OTLPSpanExporter(

endpoint="http://localhost:4318/v1/traces",

)

processor = BatchSpanProcessor(otlp_exporter)

provider.add_span_processor(processor)

trace.set_tracer_provider(provider)

logger_provider = LoggerProvider(resource=resource)

logger_provider.add_log_record_processor(

BatchLogRecordProcessor(OTLPLogExporter(endpoint="http://localhost:4318/v1/logs"))

)

_events.set_event_logger_provider(EventLoggerProvider(logger_provider))

from opentelemetry.instrumentation.anthropic import AnthropicInstrumentor

AnthropicInstrumentor().instrument()

Monocle

Installation:

pip install opentelemetry-sdk opentelemetry-exporter-otlp-proto-http monocle_apptrace

Setup:

from opentelemetry.sdk.trace.export import BatchSpanProcessor

from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter

# Import monocle_apptrace

from monocle_apptrace import setup_monocle_telemetry

# Setup Monocle telemetry with OTLP span exporter for traces

setup_monocle_telemetry(

workflow_name="opentelemetry-instrumentation-anthropic",

span_processors=[

BatchSpanProcessor(

OTLPSpanExporter(endpoint="http://localhost:4318/v1/traces")

)

]

)

Anthropic - TypeScript/JavaScript

Installation:

npm install @traceloop/node-server-sdk

Setup:

const { initialize } = require('@traceloop/node-server-sdk');

const { trace } = require('@opentelemetry/api');

initialize({

appName: 'opentelemetry-instrumentation-anthropic-traceloop',

baseUrl: 'http://localhost:4318',

disableBatch: true

});
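The Traceloop snippets set disableBatch: true, and the TypeScript Azure snippets use SimpleSpanProcessor, so each span is exported as soon as it ends. The Python snippets use BatchSpanProcessor, which buffers finished spans and exports them in groups, so spans from a running process can take a moment to reach the tracing webview. The toy Python class below only illustrates that trade-off; it is not the OpenTelemetry SDK's implementation, and ToyBatcher, max_batch, and export are hypothetical names.

```python
from typing import Callable, List


class ToyBatcher:
    """Buffer finished spans and pass them to `export` in groups of `max_batch`.

    Illustration only: the SDK's BatchSpanProcessor also exports on a timer
    from a background thread; this toy flushes only on size or on demand.
    """

    def __init__(self, export: Callable[[List[str]], None], max_batch: int = 3) -> None:
        self.export = export
        self.max_batch = max_batch
        self.buffer: List[str] = []

    def on_end(self, span_name: str) -> None:
        # Called when a span finishes; export only once the buffer is full.
        self.buffer.append(span_name)
        if len(self.buffer) >= self.max_batch:
            self.flush()

    def flush(self) -> None:
        # Hand over whatever is buffered, e.g. at shutdown.
        if self.buffer:
            self.export(self.buffer)
            self.buffer = []


exported: List[List[str]] = []
batcher = ToyBatcher(exported.append, max_batch=3)
for name in ["chat", "tool call", "chat", "agent run"]:
    batcher.on_end(name)
print(exported)  # [['chat', 'tool call', 'chat']]; "agent run" is still buffered
batcher.flush()  # what a shutdown hook does before the process exits
print(exported)  # [['chat', 'tool call', 'chat'], ['agent run']]
```

Setting max_batch=1 makes the toy export every span immediately, which is the behavior the unbatched settings above choose; batching trades that immediacy for fewer export requests.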

Google Gemini - Python

OpenTelemetry

Installation:

pip install opentelemetry-sdk opentelemetry-exporter-otlp-proto-http opentelemetry-instrumentation-google-genai

Setup:

from opentelemetry import trace, _events

from opentelemetry.sdk.resources import Resource

from opentelemetry.sdk.trace import TracerProvider

from opentelemetry.sdk.trace.export import BatchSpanProcessor

from opentelemetry.sdk._logs import LoggerProvider

from opentelemetry.sdk._logs.export import BatchLogRecordProcessor

from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter

from opentelemetry.sdk._events import EventLoggerProvider

from opentelemetry.exporter.otlp.proto.http._log_exporter import OTLPLogExporter

resource = Resource(attributes={

"service.name": "opentelemetry-instrumentation-google-genai"

})

provider = TracerProvider(resource=resource)

otlp_exporter = OTLPSpanExporter(

endpoint="http://localhost:4318/v1/traces",

)

processor = BatchSpanProcessor(otlp_exporter)

provider.add_span_processor(processor)

trace.set_tracer_provider(provider)

logger_provider = LoggerProvider(resource=resource)

logger_provider.add_log_record_processor(

BatchLogRecordProcessor(OTLPLogExporter(endpoint="http://localhost:4318/v1/logs"))

)

_events.set_event_logger_provider(EventLoggerProvider(logger_provider))

from opentelemetry.instrumentation.google_genai import GoogleGenAiSdkInstrumentor

GoogleGenAiSdkInstrumentor().instrument(enable_content_recording=True)

Monocle

Installation:

pip install opentelemetry-sdk opentelemetry-exporter-otlp-proto-http monocle_apptrace

Setup:

from opentelemetry.sdk.trace.export import BatchSpanProcessor

from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter

# Import monocle_apptrace

from monocle_apptrace import setup_monocle_telemetry

# Setup Monocle telemetry with OTLP span exporter for traces

setup_monocle_telemetry(

workflow_name="opentelemetry-instrumentation-google-genai",

span_processors=[

BatchSpanProcessor(

OTLPSpanExporter(endpoint="http://localhost:4318/v1/traces")

)

]

)

LangChain - Python

LangSmith

Installation:

pip install langsmith[otel]

Setup:

import os

os.environ["LANGSMITH_OTEL_ENABLED"] = "true"

os.environ["LANGSMITH_TRACING"] = "true"

os.environ["OTEL_EXPORTER_OTLP_ENDPOINT"] = "http://localhost:4318"
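This snippet sets the generic OTEL_EXPORTER_OTLP_ENDPOINT to the collector's base URL without a path, while the Logfire snippet for the OpenAI Agents SDK later in this article sets OTEL_EXPORTER_OTLP_TRACES_ENDPOINT to the full /v1/traces URL. Under the OpenTelemetry exporter configuration specification, an OTLP/HTTP exporter appends the signal path to the generic endpoint but uses a signal-specific endpoint as-is, so both settings reach the same collector URL. The hypothetical helper below sketches that rule in Python; it assumes the exporters in question follow the specification, and it ignores OTEL_EXPORTER_OTLP_PROTOCOL.

```python
import os
from typing import Mapping, Optional

DEFAULT_OTLP_HTTP_ENDPOINT = "http://localhost:4318"  # spec default for OTLP/HTTP


def resolve_otlp_http_endpoint(signal: str, env: Optional[Mapping[str, str]] = None) -> str:
    """Return the URL a spec-following OTLP/HTTP exporter would use for `signal`.

    `signal` is "traces", "metrics", or "logs". OTEL_EXPORTER_OTLP_PROTOCOL is ignored.
    """
    env = os.environ if env is None else env
    specific = env.get(f"OTEL_EXPORTER_OTLP_{signal.upper()}_ENDPOINT")
    if specific:
        # A signal-specific endpoint is used exactly as given; no path is appended.
        return specific
    # Otherwise the generic (or default) base URL gets the per-signal path.
    base = env.get("OTEL_EXPORTER_OTLP_ENDPOINT") or DEFAULT_OTLP_HTTP_ENDPOINT
    return base.rstrip("/") + f"/v1/{signal}"


# The LangSmith snippet above sets only the generic variable:
print(resolve_otlp_http_endpoint("traces", {"OTEL_EXPORTER_OTLP_ENDPOINT": "http://localhost:4318"}))
# The Logfire snippet sets the traces-specific variable with the full path:
print(resolve_otlp_http_endpoint("traces", {"OTEL_EXPORTER_OTLP_TRACES_ENDPOINT": "http://localhost:4318/v1/traces"}))
```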

Monocle

Installation:

pip install opentelemetry-sdk opentelemetry-exporter-otlp-proto-http monocle_apptrace

Setup:

from opentelemetry.sdk.trace.export import BatchSpanProcessor

from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter

# Import monocle_apptrace

from monocle_apptrace import setup_monocle_telemetry

# Setup Monocle telemetry with OTLP span exporter for traces

setup_monocle_telemetry(

workflow_name="opentelemetry-instrumentation-langchain",

span_processors=[

BatchSpanProcessor(

OTLPSpanExporter(endpoint="http://localhost:4318/v1/traces")

)

]

)

LangChain - TypeScript/JavaScript

Installation:

npm install @traceloop/node-server-sdk

Setup:

const { initialize } = require('@traceloop/node-server-sdk');

initialize({

appName: 'opentelemetry-instrumentation-langchain-traceloop',

baseUrl: 'http://localhost:4318',

disableBatch: true

});

OpenAI - Python

OpenTelemetry

Installation:

pip install opentelemetry-sdk opentelemetry-exporter-otlp-proto-http opentelemetry-instrumentation-openai-v2

Setup:

from opentelemetry import trace, _events

from opentelemetry.sdk.resources import Resource

from opentelemetry.sdk.trace import TracerProvider

from opentelemetry.sdk.trace.export import BatchSpanProcessor

from opentelemetry.sdk._logs import LoggerProvider

from opentelemetry.sdk._logs.export import BatchLogRecordProcessor

from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter

from opentelemetry.sdk._events import EventLoggerProvider

from opentelemetry.exporter.otlp.proto.http._log_exporter import OTLPLogExporter

from opentelemetry.instrumentation.openai_v2 import OpenAIInstrumentor

import os

os.environ["OTEL_INSTRUMENTATION_GENAI_CAPTURE_MESSAGE_CONTENT"] = "true"

# Set up resource

resource = Resource(attributes={

"service.name": "opentelemetry-instrumentation-openai"

})

# Create tracer provider

trace.set_tracer_provider(TracerProvider(resource=resource))

# Configure OTLP exporter

otlp_exporter = OTLPSpanExporter(

endpoint="http://localhost:4318/v1/traces"

)

# Add span processor

trace.get_tracer_provider().add_span_processor(

BatchSpanProcessor(otlp_exporter)

)

# Set up logger provider

logger_provider = LoggerProvider(resource=resource)

logger_provider.add_log_record_processor(

BatchLogRecordProcessor(OTLPLogExporter(endpoint="http://localhost:4318/v1/logs"))

)

_events.set_event_logger_provider(EventLoggerProvider(logger_provider))

# Enable OpenAI instrumentation

OpenAIInstrumentor().instrument()

Monocle

Installation:

pip install opentelemetry-sdk opentelemetry-exporter-otlp-proto-http monocle_apptrace

Setup:

from opentelemetry.sdk.trace.export import BatchSpanProcessor

from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter

# Import monocle_apptrace

from monocle_apptrace import setup_monocle_telemetry

# Setup Monocle telemetry with OTLP span exporter for traces

setup_monocle_telemetry(

workflow_name="opentelemetry-instrumentation-openai",

span_processors=[

BatchSpanProcessor(

OTLPSpanExporter(endpoint="http://localhost:4318/v1/traces")

)

]

)

OpenAI - TypeScript/JavaScript

Installation:

npm install @traceloop/instrumentation-openai @traceloop/node-server-sdk

Setup:

const { initialize } = require('@traceloop/node-server-sdk');

initialize({

appName: 'opentelemetry-instrumentation-openai-traceloop',

baseUrl: 'http://localhost:4318',

disableBatch: true

});

OpenAI Agents SDK - Python

Logfire

Installation:

pip install logfire

Setup:

import logfire

import os

os.environ['OTEL_EXPORTER_OTLP_TRACES_ENDPOINT'] = 'http://localhost:4318/v1/traces'

logfire.configure(

service_name="opentelemetry-instrumentation-openai-agents-logfire",

send_to_logfire=False,

)

logfire.instrument_openai_agents()

Monocle

Installation:

pip install opentelemetry-sdk opentelemetry-exporter-otlp-proto-http monocle_apptrace

Setup:

from opentelemetry.sdk.trace.export import BatchSpanProcessor

from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter

# Import monocle_apptrace

from monocle_apptrace import setup_monocle_telemetry

# Setup Monocle telemetry with OTLP span exporter for traces

setup_monocle_telemetry(

workflow_name="opentelemetry-instrumentation-openai-agents",

span_processors=[

BatchSpanProcessor(

OTLPSpanExporter(endpoint="http://localhost:4318/v1/traces")

)

]

)

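Example 1: Set up tracing with the Azure AI Inference SDK using OpenTelemetry

The following end-to-end example uses the Azure AI Inference SDK in Python and shows how to set up the tracing provider and instrumentation.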

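Prerequisites

To run this example, you need the following prerequisites:

- Visual Studio Code

- AI Toolkit extension

- Azure AI Inference SDK

- OpenTelemetry

- Python latest version

- GitHub personal access token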

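Set up the development environment

Use the following instructions to deploy a preconfigured development environment that contains all the dependencies required to run this example.

- Set up a GitHub personal access token

This example uses the free GitHub Models as its model.

Open GitHub Developer Settings and select Generate new token.

Important: The token needs the models:read permission; otherwise, requests return an unauthorized error. The token is sent to a Microsoft service.

- Create an environment variable

Create an environment variable that holds your token as the key for the client code by using one of the following code snippets. Replace <your-github-token-goes-here> with your actual GitHub token.

bash:

export GITHUB_TOKEN="<your-github-token-goes-here>"

PowerShell:

$Env:GITHUB_TOKEN="<your-github-token-goes-here>"

Windows command prompt:

set GITHUB_TOKEN=<your-github-token-goes-here>

- Install Python packages

The following command installs the Python packages required for tracing with the Azure AI Inference SDK:

pip install opentelemetry-sdk opentelemetry-exporter-otlp-proto-http azure-ai-inference[opentelemetry]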
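The main.py file created later reads the token with os.environ["GITHUB_TOKEN"], which fails with a bare KeyError when the variable is not visible to the Python process, for example because it was exported in a different terminal session. As a sketch, a small guard like the hypothetical require_env helper below turns that into a readable message; it is not part of the original example.

```python
import os


def require_env(name: str) -> str:
    """Return the value of environment variable `name`, or exit with a readable error.

    Hypothetical helper; usage in main.py: github_token = require_env("GITHUB_TOKEN")
    """
    value = os.environ.get(name, "").strip()
    if not value:
        raise SystemExit(
            f"{name} is not set in this process. Set it in the same terminal "
            "session you use to run the script, then try again."
        )
    return value
```

To use it, replace the direct lookup in main.py with github_token = require_env("GITHUB_TOKEN").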

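Set up tracing

- Create a new local directory on your computer for the project.

mkdir my-tracing-app

- Navigate to the directory you created.

cd my-tracing-app

- Open Visual Studio Code in that directory:

code .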

Create a Python file

- In the my-tracing-app directory, create a Python file named main.py. You'll add code to set up tracing and interact with the Azure AI Inference SDK.

- Add the following code to main.py and save the file:

import os

### Set up OpenTelemetry for tracing ###
os.environ["AZURE_TRACING_GEN_AI_CONTENT_RECORDING_ENABLED"] = "true"
os.environ["AZURE_SDK_TRACING_IMPLEMENTATION"] = "opentelemetry"

from opentelemetry import trace, _events
from opentelemetry.sdk.resources import Resource
from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.trace.export import BatchSpanProcessor
from opentelemetry.sdk._logs import LoggerProvider
from opentelemetry.sdk._logs.export import BatchLogRecordProcessor
from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter
from opentelemetry.sdk._events import EventLoggerProvider
from opentelemetry.exporter.otlp.proto.http._log_exporter import OTLPLogExporter

github_token = os.environ["GITHUB_TOKEN"]

resource = Resource(attributes={
    "service.name": "opentelemetry-instrumentation-azure-ai-inference"
})
provider = TracerProvider(resource=resource)
otlp_exporter = OTLPSpanExporter(
    endpoint="http://localhost:4318/v1/traces",
)
processor = BatchSpanProcessor(otlp_exporter)
provider.add_span_processor(processor)
trace.set_tracer_provider(provider)

logger_provider = LoggerProvider(resource=resource)
logger_provider.add_log_record_processor(
    BatchLogRecordProcessor(OTLPLogExporter(endpoint="http://localhost:4318/v1/logs"))
)
_events.set_event_logger_provider(EventLoggerProvider(logger_provider))

from azure.ai.inference.tracing import AIInferenceInstrumentor
AIInferenceInstrumentor().instrument()
### Set up for OpenTelemetry tracing ###

from azure.ai.inference import ChatCompletionsClient
from azure.ai.inference.models import UserMessage
from azure.ai.inference.models import TextContentItem
from azure.core.credentials import AzureKeyCredential

client = ChatCompletionsClient(
    endpoint = "https://models.inference.ai.azure.com",
    credential = AzureKeyCredential(github_token),
    api_version = "2024-08-01-preview",
)

response = client.complete(
    messages = [
        UserMessage(content = [
            TextContentItem(text = "hi"),
        ]),
    ],
    model = "gpt-4.1",
    tools = [],
    response_format = "text",
    temperature = 1,
    top_p = 1,
)

print(response.choices[0].message.content)

Run the code

- Open a new terminal in Visual Studio Code.

- In the terminal, run the code by using the command python main.py.

Check the trace data in AI Toolkit

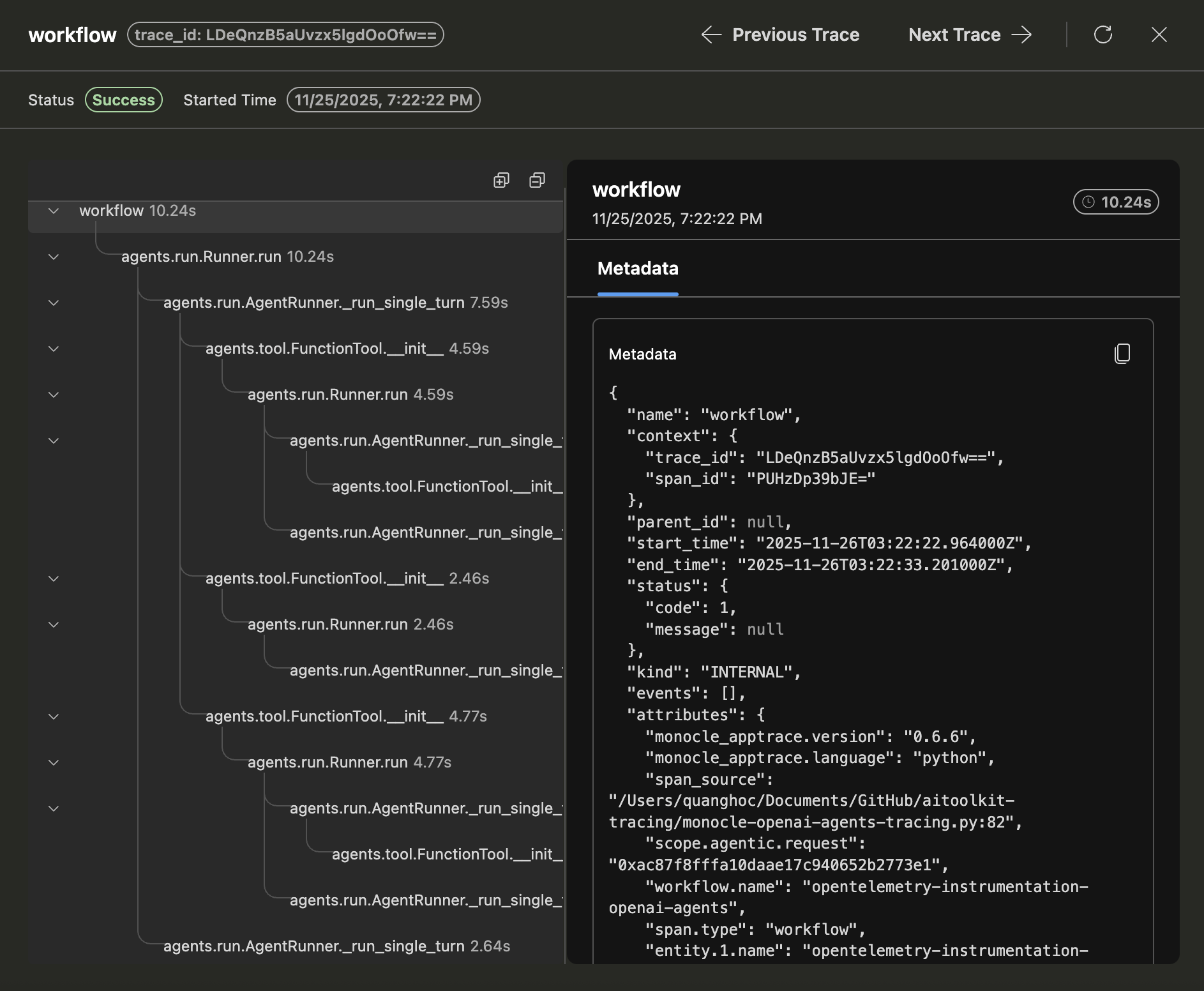

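After you run the code and refresh the tracing webview, there's a new trace in the list.

Select the trace to open the trace details webview.

Check the complete execution flow of your app in the span tree view on the left.

Select a span in the span details view on the right to see generative AI messages in the Input + Output tab.

Select the Metadata tab to view the raw metadata.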

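Example 2: Set up tracing with the OpenAI Agents SDK using Monocle

The following end-to-end example uses the OpenAI Agents SDK in Python with Monocle and shows how to set up tracing for a multi-agent travel booking system.

Prerequisites

To run this example, you need the following prerequisites:

- Visual Studio Code

- AI Toolkit extension

- Okahu Trace Visualizer

- OpenAI Agents SDK

- OpenTelemetry

- Monocle

- Python latest version

- OpenAI API key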

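Set up the development environment

Use the following instructions to deploy a preconfigured development environment that contains all the dependencies required to run this example.

- Create an environment variable

Create an environment variable for your OpenAI API key by using one of the following code snippets. Replace <your-openai-api-key> with your actual OpenAI API key.

bash:

export OPENAI_API_KEY="<your-openai-api-key>"

PowerShell:

$Env:OPENAI_API_KEY="<your-openai-api-key>"

Windows command prompt:

set OPENAI_API_KEY=<your-openai-api-key>

Alternatively, create a .env file in your project directory with the following content:

OPENAI_API_KEY=<your-openai-api-key>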

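- Install Python packages

Create a requirements.txt file with the following content:

opentelemetry-sdk
opentelemetry-exporter-otlp-proto-http
monocle_apptrace
openai-agents
python-dotenv

Install the packages by using the following command:

pip install -r requirements.txt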

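Set up tracing

- Create a new local directory on your computer for the project.

mkdir my-agents-tracing-app

- Navigate to the directory you created.

cd my-agents-tracing-app

- Open Visual Studio Code in that directory:

code .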

Create a Python file

- In the my-agents-tracing-app directory, create a Python file named main.py. You'll add code to set up tracing with Monocle and interact with the OpenAI Agents SDK.

- Add the following code to main.py and save the file:

import os
from dotenv import load_dotenv

# Load environment variables from .env file
load_dotenv()

from opentelemetry.sdk.trace.export import BatchSpanProcessor
from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter

# Import monocle_apptrace
from monocle_apptrace import setup_monocle_telemetry

# Setup Monocle telemetry with OTLP span exporter for traces
setup_monocle_telemetry(
    workflow_name="opentelemetry-instrumentation-openai-agents",
    span_processors=[
        BatchSpanProcessor(
            OTLPSpanExporter(endpoint="http://localhost:4318/v1/traces")
        )
    ]
)

from agents import Agent, Runner, function_tool

# Define tool functions
@function_tool
def book_flight(from_airport: str, to_airport: str) -> str:
    """Book a flight between airports."""
    return f"Successfully booked a flight from {from_airport} to {to_airport} for 100 USD."

@function_tool
def book_hotel(hotel_name: str, city: str) -> str:
    """Book a hotel reservation."""
    return f"Successfully booked a stay at {hotel_name} in {city} for 50 USD."

@function_tool
def get_weather(city: str) -> str:
    """Get weather information for a city."""
    return f"The weather in {city} is sunny and 75°F."

# Create specialized agents
flight_agent = Agent(
    name="Flight Agent",
    instructions="You are a flight booking specialist. Use the book_flight tool to book flights.",
    tools=[book_flight],
)

hotel_agent = Agent(
    name="Hotel Agent",
    instructions="You are a hotel booking specialist. Use the book_hotel tool to book hotels.",
    tools=[book_hotel],
)

weather_agent = Agent(
    name="Weather Agent",
    instructions="You are a weather information specialist. Use the get_weather tool to provide weather information.",
    tools=[get_weather],
)

# Create a coordinator agent with tools
coordinator = Agent(
    name="Travel Coordinator",
    instructions="You are a travel coordinator. Delegate flight bookings to the Flight Agent, hotel bookings to the Hotel Agent, and weather queries to the Weather Agent.",
    tools=[
        flight_agent.as_tool(
            tool_name="flight_expert",
            tool_description="Handles flight booking questions and requests.",
        ),
        hotel_agent.as_tool(
            tool_name="hotel_expert",
            tool_description="Handles hotel booking questions and requests.",
        ),
        weather_agent.as_tool(
            tool_name="weather_expert",
            tool_description="Handles weather information questions and requests.",
        ),
    ],
)

# Run the multi-agent workflow
if __name__ == "__main__":
    import asyncio

    result = asyncio.run(
        Runner.run(
            coordinator,
            "Book me a flight today from SEA to SFO, then book the best hotel there and tell me the weather.",
        )
    )
    print(result.final_output)

Run the code

- Open a new terminal in Visual Studio Code.

- In the terminal, run the code by using the command python main.py.

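Check the trace data in AI Toolkit

After you run the code and refresh the tracing webview, there's a new trace in the list.

Select the trace to open the trace details webview.

Check the complete execution flow of your app in the span tree view on the left, including agent invocations, tool calls, and agent delegations.

Select a span in the span details view on the right to see generative AI messages in the Input + Output tab.

Select the Metadata tab to view the raw metadata.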
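The span tree in the left panel follows from parent-child links: every span except the root records the ID of its parent span, and all spans of one run share a trace ID. The minimal Python sketch below rebuilds such a tree from a flat list of (span ID, parent span ID, name) records and prints it indented. The IDs and span names are made up to mirror the travel coordinator example; the names Monocle actually emits, and how AI Toolkit lays them out, may differ.

```python
from collections import defaultdict
from typing import Dict, List, Optional, Tuple

# (span_id, parent_span_id, name); the root span has no parent.
Span = Tuple[str, Optional[str], str]


def render_span_tree(spans: List[Span]) -> List[str]:
    """Return one indented line per span, with children under their parent in input order.

    Spans whose parent is missing from the list are skipped in this sketch.
    """
    children: Dict[Optional[str], List[Span]] = defaultdict(list)
    for span in spans:
        children[span[1]].append(span)

    lines: List[str] = []

    def walk(parent_id: Optional[str], depth: int) -> None:
        for span_id, _parent, name in children.get(parent_id, []):
            lines.append("  " * depth + name)
            walk(span_id, depth + 1)

    walk(None, 0)
    return lines


# Hypothetical spans for one travel coordinator run.
spans: List[Span] = [
    ("a1", None, "Travel Coordinator"),
    ("b1", "a1", "flight_expert"),
    ("c1", "b1", "book_flight"),
    ("b2", "a1", "hotel_expert"),
    ("c2", "b2", "book_hotel"),
    ("b3", "a1", "weather_expert"),
    ("c3", "b3", "get_weather"),
]
print("\n".join(render_span_tree(spans)))
```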

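What you learned

In this article, you learned how to:

- Set up tracing in your AI application by using the Azure AI Inference SDK and OpenTelemetry.

- Configure the OTLP trace exporter to send trace data to the local collector server.

- Run your application to generate trace data and view the traces in the AI Toolkit webview.

- Use the tracing feature with multiple SDKs and languages, including Python and TypeScript/JavaScript, and with non-Microsoft tools via OTLP.

- Instrument various AI frameworks (Anthropic, Gemini, LangChain, OpenAI, and more) by using the provided code snippets.

- Use the tracing webview UI, including the Start Collector and Refresh buttons, to manage trace data.

- Set up your development environment, including environment variables and package installation, to enable tracing.

- Analyze your app's execution flow by using the span tree and details view, including generative AI message flow and metadata.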